Character

A character is any letter, number, space, punctuation mark, or symbol typed or entered on a computer. Some invisible characters are not represented on screen but are still present to modify or format text, like a tab or carriage return. When using the ASCII character set, a single character takes up one byte of storage space. For example, the phrase "Good afternoon!" is 15 characters and would take up 15 bytes of storage.
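The byte count in the example above can be checked with a short Python sketch. It assumes the phrase is encoded as ASCII; the variable names are illustrative only.

```python
# Count the characters in the phrase, then the bytes it occupies as ASCII.
phrase = "Good afternoon!"

# encode() raises UnicodeEncodeError if any character falls outside ASCII.
encoded = phrase.encode("ascii")

print(len(phrase))   # number of characters
print(len(encoded))  # number of bytes when stored as ASCII
```

Both counts match here because every character in the phrase is part of the ASCII set, which uses one byte per character.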

Characters on a computer, like all other data, are stored as binary data. The particular sequence of bits that makes up a single character is defined by a character encoding. The two most common character sets are ASCII and Unicode. ASCII defines 128 characters (unaccented Latin letters, digits, punctuation, symbols, and control characters) using 7-bit codes, each typically stored in a single byte. Unicode text is most often stored using the UTF-8 encoding, which represents a single character with one to four bytes: one byte for ASCII characters, including the basic Latin alphabet, digits, and punctuation; two bytes for accented Latin letters and other alphabets like Greek, Cyrillic, and Hebrew; three bytes for most Chinese, Japanese, and Korean characters; and four bytes for most emoji and other supplementary characters, such as specialized mathematical alphabets.
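As a rough illustration of these variable byte lengths, the following Python sketch measures how many bytes a few sample characters occupy. It assumes the text is stored as UTF-8, the most common Unicode encoding; the helper name `utf8_length` and the sample characters are chosen here for illustration.

```python
def utf8_length(ch: str) -> int:
    """Return the number of bytes a single character occupies in UTF-8."""
    return len(ch.encode("utf-8"))

# One sample character from several scripts (illustrative choices).
samples = ["A", "Ω", "я", "א", "中", "한", "😀"]

for ch in samples:
    # U+XXXX is the character's Unicode code point in hexadecimal.
    print(f"U+{ord(ch):04X} {ch!r}: {utf8_length(ch)} byte(s)")
```

Other Unicode encodings, such as UTF-16 and UTF-32, use different byte counts for the same characters.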

In addition to the vast array of letters, numbers, and symbols across the different alphabets, character sets include some special characters that affect the text around them. The ASCII set includes control characters like the null character, which is used as a string terminator in languages such as C; the carriage return, which is used alone or together with a line feed to start a new line; and the tab, which advances to the next tab stop. Unicode specifies more groups of special characters, like word joiners and separators, which control where line breaks are allowed within and between words.
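A minimal Python sketch can make these invisible characters visible by printing their code points and Unicode general categories. It uses the standard `unicodedata` module; the `specials` dictionary and the `describe` helper are hypothetical names introduced for this example.

```python
import unicodedata

# A few special characters mentioned above (labels are illustrative).
specials = {
    "null": "\x00",
    "tab": "\t",
    "carriage return": "\r",
    "word joiner": "\u2060",
}

def describe(ch: str) -> tuple[int, str]:
    """Return the code point and Unicode general category of a character."""
    return ord(ch), unicodedata.category(ch)

for label, ch in specials.items():
    code_point, category = describe(ch)
    print(f"{label}: U+{code_point:04X}, category {category}")

# Invisible characters still count toward the length of a string.
text = "a\tb"
print(len(text))
```

The category `Cc` marks control characters such as the ASCII ones, while `Cf` marks format characters such as the word joiner.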

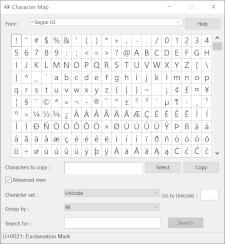

NOTE: Most operating systems include a character map application or menu that you can use to insert special characters and/or emoji.
