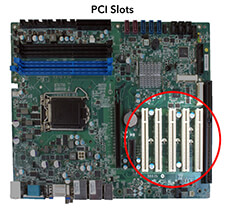

PCI

Stands for "Peripheral Component Interconnect."

PCI is a hardware bus used for adding internal components to a desktop computer. For example, a PCI card can be inserted into a PCI slot on a motherboard, providing additional I/O ports on the back of a computer.

The PCI architecture, also known as "conventional PCI," was designed by Intel and introduced in 1992. Many desktop PCs from the early 1990s to the mid-2000s had room for two to five PCI cards. Each card required an open slot on the motherboard and a removable panel on the back of the system unit. Adding PCI cards was an easy way to upgrade a computer: you could install a better video card, add faster wired or wireless networking, or add new ports, such as USB 2.0.

The original 32-bit, 33 MHz PCI standard supported data transfer rates of 133 megabytes per second. An upgraded 64-bit, 66 MHz standard was created a few years later and allowed for much faster data transfer rates of up to 533 megabytes per second. In 1998, IBM, HP, and Compaq introduced PCI-X (or "PCI eXtended"), which was backward compatible with PCI. The 133 MHz PCI-X interface supported data transfer rates of up to 1064 megabytes per second.
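The peak rates above follow from a simple product: bytes moved per transfer (bus width divided by 8) times the bus clock. The sketch below assumes one transfer per clock cycle and ignores protocol overhead, so it gives theoretical peaks only. The nominal clocks are rounded; the actual values are 33⅓, 66⅔, and 133⅓ MHz. Multiplying the rounded 133 MHz by 8 bytes gives the 1064 figure, while the exact clock gives about 1066.7. The function name `peak_mb_per_s` and the `BUSES` table are illustrative, not part of any PCI specification.

```python
from fractions import Fraction

# Illustrative table (hypothetical names): bus width in bits and exact clock in MHz.
# Nominal "33/66/133 MHz" clocks are really 33 1/3, 66 2/3, and 133 1/3 MHz.
BUSES = {
    "PCI 32-bit/33 MHz": (32, Fraction(100, 3)),
    "PCI 64-bit/66 MHz": (64, Fraction(200, 3)),
    "PCI-X 64-bit/133 MHz": (64, Fraction(400, 3)),
}


def peak_mb_per_s(width_bits: int, clock_mhz: Fraction) -> Fraction:
    """Theoretical peak rate in MB/s, assuming one transfer per clock and no overhead."""
    bytes_per_transfer = Fraction(width_bits, 8)
    # MHz = millions of transfers per second, so bytes x MHz = megabytes per second.
    return bytes_per_transfer * clock_mhz


for name, (width, clock) in BUSES.items():
    print(f"{name}: {float(peak_mb_per_s(width, clock)):.1f} MB/s")
```

Running it prints roughly 133.3, 533.3, and 1066.7 MB/s for the three buses; real-world throughput is lower because of bus arbitration and transaction overhead.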

Both PCI and PCI-X were superseded by PCI Express, which was introduced in 2004.