Load Balancing

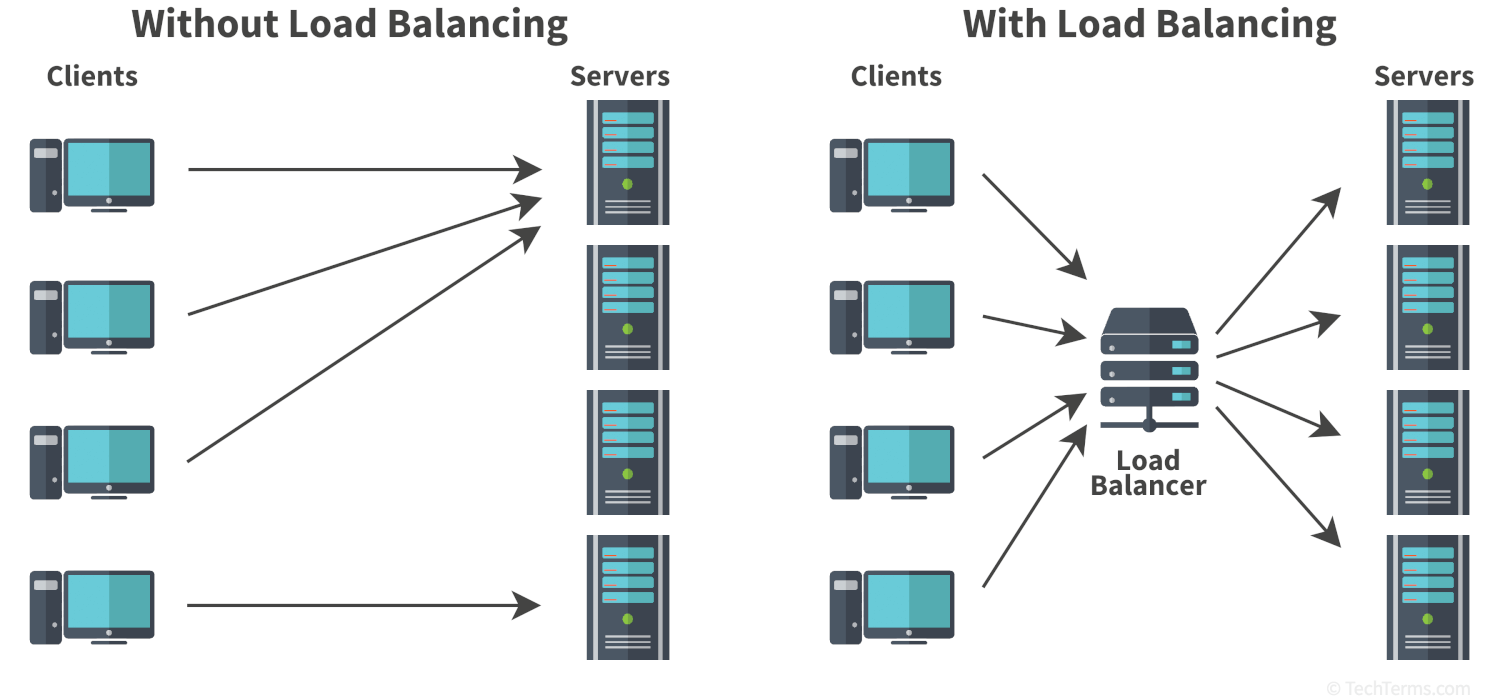

Load balancing is the practice of distributing a workload across multiple computers. It is typically performed to spread network traffic evenly between a group of web servers, but may also divide up other computational tasks like database queries and data mining. It prevents a single server from becoming overwhelmed while other servers in the cluster sit idle. By removing bottlenecks, proper load balancing helps improve the performance of servers hosting websites and web applications.

If a single web server suddenly gets five or ten times as many requests per second as it usually does, it may struggle to keep up with that demand. It may serve content more slowly than usual or even crash under the load. Balancing those requests between multiple servers helps keep websites and web applications available and responsive. Load balancing can also work across multiple locations: if one data center's Internet connection is saturated, requests can be sent to another data center, making more effective use of the available bandwidth.

Whether load balancing happens on a local network or in a large data center, it requires dedicated hardware or software to divide incoming traffic between servers. These hardware devices and software programs are known as load balancers. When a load balancer receives incoming traffic, it distributes requests using a load-balancing algorithm. Static algorithms distribute tasks in a predetermined pattern, like a round-robin sequence, or in a random order, without regard to how busy each server is. Dynamic algorithms instead consider each server's current load and route tasks to the least busy servers. Dynamic balancing across a distributed content delivery network (CDN) may also consider geography, routing each request to the server nearest the client to lower latency by shortening the distance data packets must travel.
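To make the difference between static and dynamic algorithms concrete, here is a minimal Python sketch of three server-selection strategies: round-robin and random (static), and least-loaded (dynamic). The class names, the `pick` and `release` methods, and the server names are hypothetical and chosen only for illustration. The sketch assumes "current load" means the number of in-flight requests, and it omits things a real load balancer needs, such as health checks, server weights, and thread safety.

```python
import itertools
import random


class RoundRobinBalancer:
    """Static: hands out servers in a fixed, repeating order."""

    def __init__(self, servers):
        self._cycle = itertools.cycle(servers)

    def pick(self):
        return next(self._cycle)


class RandomBalancer:
    """Static: picks a server uniformly at random for each request."""

    def __init__(self, servers, seed=None):
        self._servers = list(servers)
        self._rng = random.Random(seed)

    def pick(self):
        return self._rng.choice(self._servers)


class LeastLoadedBalancer:
    """Dynamic: routes each request to the server with the fewest active requests.

    Assumption for this sketch: load is measured as in-flight request count.
    """

    def __init__(self, servers):
        # Current number of in-flight requests per server.
        self.active = {server: 0 for server in servers}

    def pick(self):
        # min() returns the first server with the lowest count,
        # so ties go to the server listed earliest.
        server = min(self.active, key=self.active.get)
        self.active[server] += 1
        return server

    def release(self, server):
        # Call when a request finishes so the load count stays accurate.
        self.active[server] -= 1


if __name__ == "__main__":
    servers = ["web1", "web2", "web3"]  # hypothetical server names

    rr = RoundRobinBalancer(servers)
    print([rr.pick() for _ in range(5)])  # ['web1', 'web2', 'web3', 'web1', 'web2']

    ll = LeastLoadedBalancer(servers)
    first = ll.pick()   # 'web1': all servers idle, tie broken by list order
    second = ll.pick()  # 'web2'
    ll.release(first)   # web1 finishes its request
    print(ll.pick())    # 'web1' again, since it is idle now
```

The static balancers never look at server state, so they are cheap but can keep sending work to a server that is already busy. The least-loaded balancer must track every request's start and finish, which is the extra bookkeeping that lets dynamic algorithms steer traffic away from overloaded servers.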
