Hexadecimal

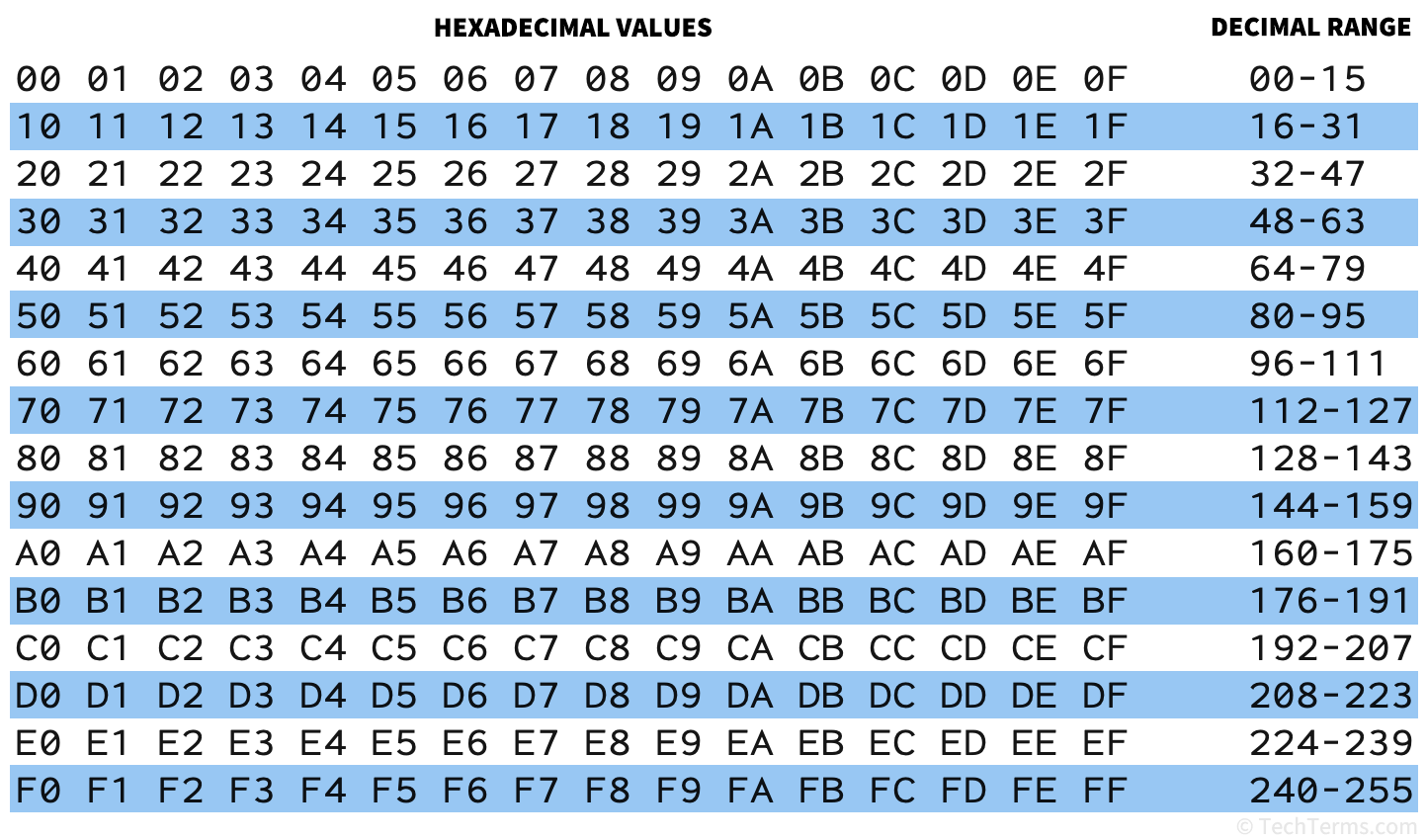

Hexadecimal is a base-16 number system commonly used to represent binary data. Unlike the base-10 (decimal) system, which uses 10 symbols to represent numbers, hexadecimal uses 16 symbols: the numerals "0"-"9" represent the values 0-9, and the letters "A"-"F" represent the values 10-15.

In a base-10 system, you count in multiples of 10. Each time a digit passes 9, it resets to 0 and the next-higher digit increases by one. For example, counting "8-9-10-11-12" increments the second digit (the tens column) from 0 to 1 and starts the first digit (the ones column) back at 0; counting "98-99-100-101-102" rolls the second digit over to 0 and increments the third digit (the hundreds column). In a base-16 system, the next-higher digit increases every 16 values instead: counting "8-9-A-B-C-D-E-F-10-11-12" does not increment the second digit until after F (the value 15) rather than after 9.
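To see the base-16 counting sequence described above, the short Python sketch below prints a range of integers in hexadecimal. The function name `count_in_hex` is a hypothetical helper chosen for illustration; it relies only on Python's built-in `format(n, "X")`, which renders an integer in base 16 with uppercase digits.

```python
def count_in_hex(start: int, stop: int) -> list[str]:
    """Return the values start..stop (inclusive) written as uppercase hexadecimal."""
    # format(n, "X") renders an integer in base 16 using the digits 0-9 and A-F
    return [format(n, "X") for n in range(start, stop + 1)]


# Count from decimal 8 to decimal 18; the second hex digit appears only after F
print("-".join(count_in_hex(8, 18)))  # 8-9-A-B-C-D-E-F-10-11-12
```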

Computers use hexadecimal to present binary data in a shorter, human-readable format: instead of a long string of 0s and 1s, hexadecimal values are compact and legible. Each hexadecimal digit represents one of the 16 possible combinations of 4 bits, a group known as a nybble (or nibble); for example, the hexadecimal digit B represents the bit pattern 1011. Since a byte is 8 bits, two hexadecimal digits can represent any possible byte value. Hexadecimal digits are also case-insensitive, so bb74 and BB74 are the same value.
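The digit-to-nybble mapping can be made concrete with a minimal Python sketch. Here `byte_to_hex` is a hypothetical helper that splits a byte into its upper and lower 4 bits and looks each one up as a single hex digit; the remaining lines use Python's built-in `format`, `bytes.hex()`, and `int(..., 16)` to show the 4-bit pattern for B, two digits per byte, and case-insensitive parsing.

```python
def byte_to_hex(value: int) -> str:
    """Split one byte (0-255) into its high and low nybbles and map each to a hex digit."""
    if not 0 <= value <= 255:
        raise ValueError("a byte must be between 0 and 255")
    digits = "0123456789ABCDEF"
    high = value >> 4    # upper 4 bits (first nybble)
    low = value & 0x0F   # lower 4 bits (second nybble)
    return digits[high] + digits[low]


print(format(0xB, "04b"))                    # 1011 (the 4-bit pattern for B)
print(byte_to_hex(0b10111011))               # BB (two nybbles, two hex digits)
print(bytes([0xBB, 0x74]).hex())             # bb74 (two hex digits per byte)
print(int("bb74", 16) == int("BB74", 16))    # True (case does not matter)
```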

Hexadecimal can also represent large numbers using fewer characters than decimal. Two hexadecimal digits can represent any value from 0 to 255 (three decimal digits), while six hexadecimal digits can represent any value from 0 to 16,777,215 (eight decimal digits). The following values all represent the same number:

- Decimal: 47,988

- Hex: BB74

- Binary: 1011 1011 0111 0100
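One common way to produce these equivalent forms is repeated division: divide by the target base, keep the remainder as the next digit (least significant first), and repeat until the quotient is zero. The Python sketch below implements that method in a hypothetical `to_base` helper and checks it against the values listed above, along with the digit ranges mentioned earlier.

```python
def to_base(n: int, base: int) -> str:
    """Convert a non-negative integer to a digit string in the given base (2-16)
    by repeated division; each remainder is the next digit, least significant first."""
    if not 2 <= base <= 16:
        raise ValueError("base must be between 2 and 16")
    digits = "0123456789ABCDEF"
    if n == 0:
        return "0"
    out = []
    while n > 0:
        n, r = divmod(n, base)
        out.append(digits[r])
    return "".join(reversed(out))


print(to_base(47988, 16))   # BB74
print(to_base(47988, 2))    # 1011101101110100
print(int("BB74", 16))      # 47988
# Largest values that fit in two and six hex digits
print(16**2 - 1, 16**6 - 1) # 255 16777215
```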

Using Hexadecimal

Since many hexadecimal numbers could be mistaken for decimal numbers (such as 10) or words (such as BEEF), they are often written with a special notation that marks them as hexadecimal. For example, Unix and the C programming language (as well as C-family languages like C++ and Java) use the prefix 0x before a hexadecimal number in source code, as in 0xBB74. Unicode code points are written in hexadecimal with the prefix U+; the letter "T", for instance, is U+0054.
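Python, used here only for illustration, follows the same 0x convention as C for integer literals. The sketch below shows a 0x literal, Python's built-in `hex()` and `int(..., 16)` (which accepts an optional 0x prefix in the string), and a hypothetical `unicode_notation` helper that formats a character's code point in U+ notation with at least four hex digits.

```python
# Python follows the same 0x convention as C for integer literals
value = 0xBB74
print(value)                # 47988
print(hex(value))           # 0xbb74 (Python prints hex in lowercase)

# int() with base 16 also accepts an optional 0x prefix in a string
print(int("0xBB74", 16))    # 47988


def unicode_notation(ch: str) -> str:
    """Format a character's code point in U+ notation (at least four hex digits)."""
    return f"U+{ord(ch):04X}"


print(unicode_notation("T"))  # U+0054
print(chr(0x54))              # T
```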

Web designers and graphic designers often represent RGB colors with hexadecimal color codes. These codes start with a # symbol followed by six hexadecimal digits: two digits, representing a value from 0 to 255, for each of the red, green, and blue channels. For example, #800080 represents purple by mixing red and blue in equal amounts (the hex value 80, or 128 in decimal) with no green (the hex value 00, or 0). Six hexadecimal digits can represent 16,777,216 (more than 16 million) possible colors.
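Because each channel is simply a pair of hex digits, converting between a color code and its RGB values is a matter of slicing and parsing. The Python sketch below assumes the six-digit #RRGGBB form described above (shorthand forms such as #RGB are not handled); `hex_to_rgb` and `rgb_to_hex` are hypothetical helper names.

```python
def hex_to_rgb(code: str) -> tuple[int, int, int]:
    """Convert a #RRGGBB color code into (red, green, blue) values from 0 to 255."""
    code = code.lstrip("#")
    if len(code) != 6:
        raise ValueError("expected six hexadecimal digits, e.g. #800080")
    # Each pair of hex digits is one channel: RR, GG, BB
    r, g, b = (int(code[i:i + 2], 16) for i in (0, 2, 4))
    return r, g, b


def rgb_to_hex(r: int, g: int, b: int) -> str:
    """Format three 0-255 channel values as an uppercase #RRGGBB code."""
    return f"#{r:02X}{g:02X}{b:02X}"


print(hex_to_rgb("#800080"))    # (128, 0, 128)
print(rgb_to_hex(128, 0, 128))  # #800080
print(16**6)                    # 16777216 possible colors
```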
